Quarkus is a framework for building Java applications in the era of microservices and serverless architectures. Compared with other frameworks such as Spring Boot / Spring Cloud or Micronaut, the first difference is its native support for running on Kubernetes or OpenShift. It is built on top of well-known Java standards like CDI, JAX-RS, and Eclipse MicroProfile, which also distinguishes it from Spring Boot and Micronaut. Continue reading “Quick Guide to Microservices with Quarkus on Openshift”

Tag: Minishift

Running Java Microservices on OpenShift using Source-2-Image

One of the reasons you might prefer OpenShift over Kubernetes is the simplicity of running new applications. When working with plain Kubernetes you need to provide an already built image together with the set of descriptor templates used for deploying it. OpenShift introduces the Source-2-Image (S2I) feature for building reproducible Docker images from application source code. With S2I you don’t have to provide any Kubernetes YAML templates or build the Docker image yourself; OpenShift will do it for you. Let’s see how it works. The best way to test it locally is via Minishift. But the first step is to prepare the sample applications’ source code. Continue reading “Running Java Microservices on OpenShift using Source-2-Image”

Integration tests on OpenShift using Arquillian Cube and Istio

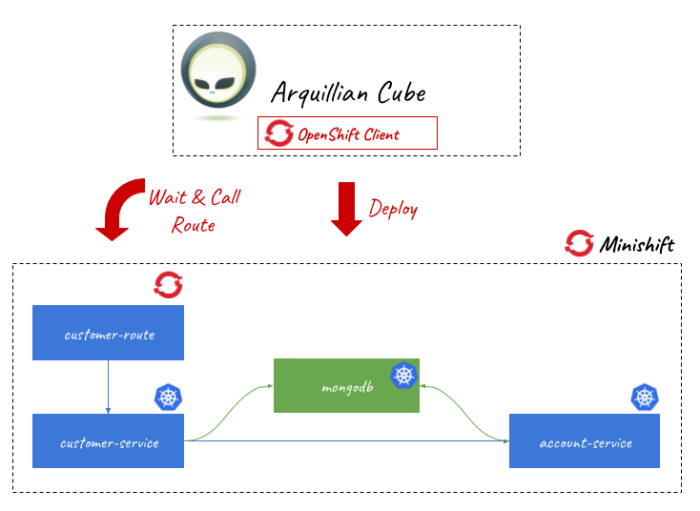

Building integration tests for applications deployed on Kubernetes/OpenShift platforms seems to be quite a big challenge. With Arquillian Cube, an Arquillian extension for managing Docker containers, it is not complicated. The Kubernetes extension, which is part of Arquillian Cube, helps you write and run integration tests for your Kubernetes/OpenShift application. It is responsible for creating and managing a temporary namespace for your tests and applying all the Kubernetes resources required to set up your environment; once everything is ready, it runs the defined integration tests.

The good news about Arquillian Cube is that it supports Istio. You can apply Istio resources before executing tests. One of the most important features of Istio is the ability to control traffic behavior with rich routing rules, retries, delays, failovers, and fault injection. It allows you to test unexpected situations in network communication between microservices, such as server errors or timeouts.

If you would like to run tests using Istio resources on Minishift, you should first install Istio on your platform. To do that you need to change some privileges for your OpenShift user. Let’s do that.

1. Enabling Istio on Minishift

Istio requires some high-level privileges to run on OpenShift. To add those privileges to the current user we need to log in as a user with the cluster-admin role. First, we should enable the admin-user addon on Minishift by executing the following command.

$ minishift addons enable admin-user

After that you will be able to log in as the system:admin user, which has the cluster-admin role. With this user you can also grant the cluster-admin role to other users, for example admin. Let’s do that.

$ oc login -u system:admin
$ oc adm policy add-cluster-role-to-user cluster-admin admin
$ oc login -u admin -p admin

Now, let’s create a new project dedicated to Istio and then add the required privileges.

$ oc new-project istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-ingress-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z default -n istio-system
$ oc adm policy add-scc-to-user anyuid -z prometheus -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-egressgateway-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-citadel-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-ingressgateway-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-cleanup-old-ca-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-mixer-post-install-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-mixer-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-pilot-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-sidecar-injector-service-account -n istio-system
$ oc adm policy add-scc-to-user anyuid -z istio-galley-service-account -n istio-system
$ oc adm policy add-scc-to-user privileged -z default -n myproject

Finally, we may proceed to installing the Istio components. I downloaded the newest version of Istio available at the time of writing, 1.0.1. The installation file is available under the install/kubernetes directory. You just have to apply it to your Minishift instance by calling the oc apply command.

$ oc apply -f install/kubernetes/istio-demo.yaml

2. Enabling Istio for Arquillian Cube

I have already described how to use Arquillian Cube to run tests with OpenShift in the article Testing microservices on OpenShift using Arquillian Cube. Compared with the sample described in that article, we need to include the dependency responsible for enabling Istio features.

<dependency>
  <groupId>org.arquillian.cube</groupId>
  <artifactId>arquillian-cube-istio-kubernetes</artifactId>
  <version>1.17.1</version>
  <scope>test</scope>
</dependency>

Now we can use the @IstioResource annotation to apply Istio resources to the OpenShift cluster, or the IstioAssistant bean to add and remove resources programmatically or to poll the availability of URLs.

Let’s take a look at the following JUnit test class that uses Arquillian Cube with Istio support. In addition to the standard test setup for running on an OpenShift instance, I have added the Istio resource file customer-to-account-route.yaml and invoke the await method provided by IstioAssistant. The first test, test1CustomerRoute, creates a new customer, so it needs to wait until customer-route is deployed on OpenShift. The next test, test2AccountRoute, adds an account for the newly created customer, so it needs to wait until account-route is deployed on OpenShift. Finally, test3GetCustomerWithAccounts runs; it calls the method responsible for finding a customer by id together with its list of accounts. In that case customer-service calls an endpoint exposed by account-service. As you have probably noticed, the last line of that test method asserts that the list of accounts is empty: Assert.assertTrue(c.getAccounts().isEmpty()). Why? We will simulate a timeout in communication between customer-service and account-service using Istio rules.

@Category(RequiresOpenshift.class)

@RequiresOpenshift

@Templates(templates = {

@Template(url = "classpath:account-deployment.yaml"),

@Template(url = "classpath:deployment.yaml")

})

@RunWith(ArquillianConditionalRunner.class)

@IstioResource("classpath:customer-to-account-route.yaml")

@FixMethodOrder(MethodSorters.NAME_ASCENDING)

public class IstioRuleTest {

private static final Logger LOGGER = LoggerFactory.getLogger(IstioRuleTest.class);

private static String id;

@ArquillianResource

private IstioAssistant istioAssistant;

@RouteURL(value = "customer-route", path = "/customer")

private URL customerUrl;

@RouteURL(value = "account-route", path = "/account")

private URL accountUrl;

@Test

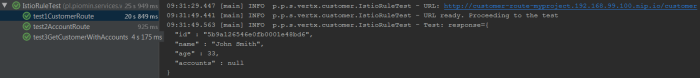

public void test1CustomerRoute() {

LOGGER.info("URL: {}", customerUrl);

istioAssistant.await(customerUrl, r -> r.isSuccessful());

LOGGER.info("URL ready. Proceeding to the test");

OkHttpClient httpClient = new OkHttpClient();

RequestBody body = RequestBody.create(MediaType.parse("application/json"), "{\"name\":\"John Smith\", \"age\":33}");

Request request = new Request.Builder().url(customerUrl).post(body).build();

try {

Response response = httpClient.newCall(request).execute();

ResponseBody b = response.body();

String json = b.string();

LOGGER.info("Test: response={}", json);

Assert.assertNotNull(b);

Assert.assertEquals(200, response.code());

Customer c = Json.decodeValue(json, Customer.class);

this.id = c.getId();

} catch (IOException e) {

e.printStackTrace();

}

}

@Test

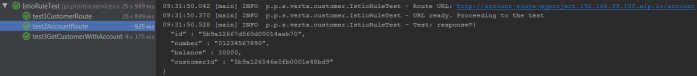

public void test2AccountRoute() {

LOGGER.info("Route URL: {}", accountUrl);

istioAssistant.await(accountUrl, r -> r.isSuccessful());

LOGGER.info("URL ready. Proceeding to the test");

OkHttpClient httpClient = new OkHttpClient();

RequestBody body = RequestBody.create(MediaType.parse("application/json"), "{\"number\":\"01234567890\", \"balance\":10000, \"customerId\":\"" + this.id + "\"}");

Request request = new Request.Builder().url(accountUrl).post(body).build();

try {

Response response = httpClient.newCall(request).execute();

ResponseBody b = response.body();

String json = b.string();

LOGGER.info("Test: response={}", json);

Assert.assertNotNull(b);

Assert.assertEquals(200, response.code());

} catch (IOException e) {

e.printStackTrace();

}

}

@Test

public void test3GetCustomerWithAccounts() {

String url = customerUrl + "/" + id;

LOGGER.info("Calling URL: {}", url);

OkHttpClient httpClient = new OkHttpClient();

Request request = new Request.Builder().url(url).get().build();

try {

Response response = httpClient.newCall(request).execute();

String json = response.body().string();

LOGGER.info("Test: response={}", json);

Assert.assertNotNull(response.body());

Assert.assertEquals(200, response.code());

Customer c = Json.decodeValue(json, Customer.class);

Assert.assertTrue(c.getAccounts().isEmpty());

} catch (IOException e) {

e.printStackTrace();

}

}

}
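The numeric prefixes in the test method names matter because MethodSorters.NAME_ASCENDING runs methods in lexicographic order of their names. The sketch below does not call JUnit at all; it only uses plain string sorting from the JDK to illustrate the resulting order. The class name MethodOrderSketch and its helper are hypothetical and exist only for this illustration.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical helper, not part of JUnit or Arquillian Cube.
final class MethodOrderSketch {

    // Returns the names sorted lexicographically, which mirrors how
    // name-based ordering arranges the test methods.
    static List<String> executionOrder(List<String> methodNames) {
        List<String> sorted = new ArrayList<>(methodNames);
        Collections.sort(sorted);
        return sorted;
    }

    public static void main(String[] args) {
        List<String> names = List.of(
                "test3GetCustomerWithAccounts",
                "test1CustomerRoute",
                "test2AccountRoute");
        System.out.println(executionOrder(names));
    }
}
```

One consequence of lexicographic ordering is that a name such as test10Something would sort before test2AccountRoute, so zero-padded prefixes (test01, test02, ...) are safer once a class grows beyond nine ordered tests.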

3. Creating Istio rules

One of the interesting features provided by Istio is the ability to inject faults into route rules. We can specify one or more faults to inject while forwarding HTTP requests to the rule’s corresponding request destination. The faults can be either delays or aborts. For both types of fault we can define the percentage of affected requests using the percent field. In the following Istio resource I have defined a 2-second delay for every single request sent to account-service.

apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: account-service
spec:
  hosts:
  - account-service
  http:
  - fault:
      delay:
        fixedDelay: 2s
        percent: 100
    route:
    - destination:
        host: account-service
        subset: v1
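To make the meaning of fixedDelay and percent more concrete, here is a minimal Java sketch of the per-request decision such a rule describes. It is only an illustration under my own assumptions, not how Istio or Envoy implement fault injection; the class DelayFaultSketch and its method are hypothetical names.

```java
import java.time.Duration;
import java.util.Random;

// Hypothetical model of a delay fault; not Istio or Envoy code.
final class DelayFaultSketch {

    private final Duration fixedDelay;
    private final int percent; // 0..100, share of requests that get delayed

    DelayFaultSketch(Duration fixedDelay, int percent) {
        if (percent < 0 || percent > 100) {
            throw new IllegalArgumentException("percent must be between 0 and 100");
        }
        this.fixedDelay = fixedDelay;
        this.percent = percent;
    }

    // Decides, for a single request, how long it should be held back.
    Duration delayFor(Random random) {
        // nextInt(100) yields 0..99, so percent = 100 always injects
        // the delay and percent = 0 never does.
        boolean inject = random.nextInt(100) < percent;
        return inject ? fixedDelay : Duration.ZERO;
    }
}
```

With percent set to 100, as in the resource above, every request is delayed, which keeps the test deterministic. A lower value would make the outcome of the test depend on chance.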

Besides the VirtualService we also need to define a DestinationRule for account-service. It is really simple: we just have to define the version label of the target service.

apiVersion: networking.istio.io/v1alpha3
kind: DestinationRule
metadata:
  name: account-service
spec:
  host: account-service
  subsets:
  - name: v1
    labels:
      version: v1

Before running the test we should also modify the OpenShift deployment templates of our sample applications. We need to inject the Istio sidecar definition into the pod definitions using the istioctl kube-inject command, as shown below.

$ istioctl kube-inject -f deployment.yaml -o customer-deployment-istio.yaml
$ istioctl kube-inject -f account-deployment.yaml -o account-deployment-istio.yaml

Finally, we may rewrite the generated files into OpenShift templates. Here’s a fragment of the OpenShift template containing the DeploymentConfig definition for account-service.

kind: Template
apiVersion: v1
metadata:
  name: account-template
objects:
  - kind: DeploymentConfig
    apiVersion: v1
    metadata:
      name: account-service
      labels:
        app: account-service
        name: account-service
        version: v1
    spec:
      template:
        metadata:
          annotations:
            sidecar.istio.io/status: '{"version":"364ad47b562167c46c2d316a42629e370940f3c05a9b99ccfe04d9f2bf5af84d","initContainers":["istio-init"],"containers":["istio-proxy"],"volumes":["istio-envoy","istio-certs"],"imagePullSecrets":null}'
          name: account-service
          labels:
            app: account-service
            name: account-service
            version: v1
        spec:
          containers:
          - env:
            - name: DATABASE_NAME
              valueFrom:
                secretKeyRef:
                  key: database-name
                  name: mongodb
            - name: DATABASE_USER
              valueFrom:
                secretKeyRef:
                  key: database-user
                  name: mongodb
            - name: DATABASE_PASSWORD
              valueFrom:
                secretKeyRef:
                  key: database-password
                  name: mongodb
            image: piomin/account-vertx-service
            name: account-vertx-service
            ports:
            - containerPort: 8095
            resources: {}
          - args:
            - proxy
            - sidecar
            - --configPath
            - /etc/istio/proxy
            - --binaryPath
            - /usr/local/bin/envoy
            - --serviceCluster
            - account-service
            - --drainDuration
            - 45s
            - --parentShutdownDuration
            - 1m0s
            - --discoveryAddress
            - istio-pilot.istio-system:15007
            - --discoveryRefreshDelay
            - 1s
            - --zipkinAddress
            - zipkin.istio-system:9411
            - --connectTimeout
            - 10s
            - --statsdUdpAddress
            - istio-statsd-prom-bridge.istio-system:9125
            - --proxyAdminPort
            - "15000"
            - --controlPlaneAuthPolicy
            - NONE
            env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            - name: INSTANCE_IP
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
            - name: ISTIO_META_POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: ISTIO_META_INTERCEPTION_MODE
              value: REDIRECT
            image: gcr.io/istio-release/proxyv2:1.0.1
            imagePullPolicy: IfNotPresent
            name: istio-proxy
            resources:
              requests:
                cpu: 10m
            securityContext:
              readOnlyRootFilesystem: true
              runAsUser: 1337
            volumeMounts:
            - mountPath: /etc/istio/proxy
              name: istio-envoy
            - mountPath: /etc/certs/
              name: istio-certs
              readOnly: true
          initContainers:
          - args:
            - -p
            - "15001"
            - -u
            - "1337"
            - -m
            - REDIRECT
            - -i
            - '*'
            - -x
            - ""
            - -b
            - 8095,
            - -d
            - ""
            image: gcr.io/istio-release/proxy_init:1.0.1
            imagePullPolicy: IfNotPresent
            name: istio-init
            resources: {}
            securityContext:
              capabilities:
                add:
                - NET_ADMIN
          volumes:
          - emptyDir:
              medium: Memory
            name: istio-envoy
          - name: istio-certs
            secret:
              optional: true
              secretName: istio.default

4. Building applications

The sample applications are implemented using the Eclipse Vert.x framework. They use a Mongo database for storing data. The connection settings are injected into the pods using Kubernetes Secrets.

public class MongoVerticle extends AbstractVerticle {

private static final Logger LOGGER = LoggerFactory.getLogger(MongoVerticle.class);

@Override

public void start() throws Exception {

ConfigStoreOptions envStore = new ConfigStoreOptions()

.setType("env")

.setConfig(new JsonObject().put("keys", new JsonArray().add("DATABASE_USER").add("DATABASE_PASSWORD").add("DATABASE_NAME")));

ConfigRetrieverOptions options = new ConfigRetrieverOptions().addStore(envStore);

ConfigRetriever retriever = ConfigRetriever.create(vertx, options);

retriever.getConfig(r -> {

String user = r.result().getString("DATABASE_USER");

String password = r.result().getString("DATABASE_PASSWORD");

String db = r.result().getString("DATABASE_NAME");

JsonObject config = new JsonObject();

// Log the database and user only, so the password does not end up in the logs
LOGGER.info("Connecting {} using user {}", db, user);

config.put("connection_string", "mongodb://" + user + ":" + password + "@mongodb/" + db);

final MongoClient client = MongoClient.createShared(vertx, config);

final CustomerRepository service = new CustomerRepositoryImpl(client);

ProxyHelper.registerService(CustomerRepository.class, vertx, service, "customer-service");

});

}

}
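The verticle above concatenates the credentials straight into the connection string. As a hedged sketch of a slightly more defensive variant, the following JDK-only helper fails fast when a variable is missing and percent-encodes the user and password so that characters such as '@' or ':' cannot break the URI. The class MongoConnectionStringSketch and its methods are hypothetical and are not part of the sample repository. Only the three variable names and the mongodb host come from the article.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.util.Map;

// Hypothetical helper, not part of the sample project.
final class MongoConnectionStringSketch {

    // Percent-encodes a credential. URLEncoder emits '+' for spaces,
    // so that is rewritten to "%20" for use inside a URI.
    static String encode(String value) {
        return URLEncoder.encode(value, StandardCharsets.UTF_8).replace("+", "%20");
    }

    // Reads the three keys used in the article and fails fast if one is missing.
    static String fromEnv(Map<String, String> env) {
        String user = require(env, "DATABASE_USER");
        String password = require(env, "DATABASE_PASSWORD");
        String db = require(env, "DATABASE_NAME");
        return "mongodb://" + encode(user) + ":" + encode(password) + "@mongodb/" + db;
    }

    private static String require(Map<String, String> env, String key) {
        String value = env.get(key);
        if (value == null || value.isEmpty()) {
            throw new IllegalStateException("Missing environment variable: " + key);
        }
        return value;
    }
}
```

Outside of a test it could be called as MongoConnectionStringSketch.fromEnv(System.getenv()), with the result passed as the connection_string value.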

MongoDB should be started on OpenShift before any application that connects to it. To achieve this we should reference the Mongo deployment resource in the Arquillian configuration file using the env.config.resource.name property.

The configuration of Arquillian Cube is shown below. We will use the existing namespace myproject, which has already been granted the required privileges (see Step 1). We also need to pass the authentication token of the user admin. You can obtain it with the command oc whoami -t after logging in to the OpenShift cluster.

<extension qualifier="openshift">
  <property name="namespace.use.current">true</property>
  <property name="namespace.use.existing">myproject</property>
  <property name="kubernetes.master">https://192.168.99.100:8443</property>
  <property name="cube.auth.token">TYYccw6pfn7TXtH8bwhCyl2tppp5MBGq7UXenuZ0fZA</property>
  <property name="env.config.resource.name">mongo-deployment.yaml</property>
</extension>

The communication between customer-service and account-service is handled by the Vert.x WebClient. We set the client’s timeout to 1 second. Because Istio injects a 2-second delay into the route, the call is going to end with a timeout, and the client then falls back to an empty list of accounts.

public class AccountClient {

private static final Logger LOGGER = LoggerFactory.getLogger(AccountClient.class);

private Vertx vertx;

public AccountClient(Vertx vertx) {

this.vertx = vertx;

}

public AccountClient findCustomerAccounts(String customerId, Handler<AsyncResult<List>> resultHandler) {

WebClient client = WebClient.create(vertx);

client.get(8095, "account-service", "/account/customer/" + customerId).timeout(1000).send(res2 -> {

if (res2.succeeded()) {

LOGGER.info("Response: {}", res2.result().bodyAsString());

List accounts = res2.result().bodyAsJsonArray().stream().map(it -> Json.decodeValue(it.toString(), Account.class)).collect(Collectors.toList());

resultHandler.handle(Future.succeededFuture(accounts));

} else {

resultHandler.handle(Future.succeededFuture(new ArrayList()));

}

});

return this;

}

}
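The interplay between the 1-second client timeout and the 2-second injected delay can be reproduced without a cluster. The following sketch uses only CompletableFuture from the JDK (Java 9 or newer) instead of the Vert.x WebClient. The slow call stands in for account-service behind the delayed route, and all names in it are hypothetical.

```java
import java.util.Collections;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.TimeUnit;

// Hypothetical stand-in for the client; it does not use Vert.x.
final class TimeoutFallbackSketch {

    // Simulates a remote call that answers only after delayMillis,
    // standing in for the delay injected into the route.
    static CompletableFuture<List<String>> slowCall(long delayMillis) {
        return CompletableFuture.supplyAsync(
                () -> List.of("account-1"),
                CompletableFuture.delayedExecutor(delayMillis, TimeUnit.MILLISECONDS));
    }

    // Waits at most timeoutMillis; if no response arrives in time,
    // an empty list is returned instead of an error.
    static List<String> findAccounts(long delayMillis, long timeoutMillis) {
        return slowCall(delayMillis)
                .completeOnTimeout(Collections.emptyList(), timeoutMillis, TimeUnit.MILLISECONDS)
                .join();
    }
}
```

Calling findAccounts(2000, 1000) mirrors the scenario in the test: the delay exceeds the timeout, so the caller receives an empty list, which is exactly what the final assertion of test3GetCustomerWithAccounts expects.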

The full code of sample applications is available on GitHub in the repository https://github.com/piomin/sample-vertx-kubernetes/tree/openshift-istio-tests.

5. Running tests

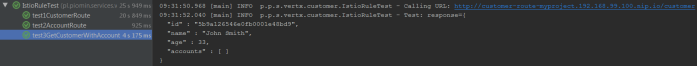

You can run the tests during the Maven build or from your IDE. The test1CustomerRoute test is executed first. It adds a new customer and saves the generated id for the next two tests.

The next test is test2AccountRoute. It adds an account for the customer created during the previous test.

Finally, the test responsible for verifying communication between the microservices runs. It verifies that the list of accounts is empty, which is the result of the timeout in communication with account-service.

Testing microservices on OpenShift using Arquillian Cube

I first came across the Arquillian framework when I was building automated end-to-end tests for JavaEE based applications. At that time testing applications deployed on JavaEE servers was not very comfortable. Arquillian came with a nice solution to that problem. It provides useful mechanisms for testing EJBs deployed on an embedded application server.

Currently, Arquillian provides multiple modules dedicated to different technologies and use cases. One of these modules is Arquillian Cube. With this extension you can create integration/functional tests running on Docker containers or on more advanced orchestration platforms like Kubernetes or OpenShift.

In this article I’m going to show you how to use Arquillian Cube for building integration tests for applications running on the OpenShift platform. All the examples are deployed locally on Minishift. Here’s the full list of topics covered in this article:

- Using Arquillian Cube for deploying, and running applications on Minishift

- Testing applications deployed on Minishift by calling their REST API exposed using OpenShift routes

- Testing inter-service communication between deployed applications based on Kubernetes services

Before reading this article it is worth reading two of my previous articles about Kubernetes and OpenShift:

- Running Vert.x Microservices on Kubernetes/OpenShift – describes how to run Vert.x microservices on Minishift, integrate them with Mongo database, and provide inter-service communication between them

- Quick guide to deploying Java apps on OpenShift – describes further steps of running Minishift and deploying applications on that platform

The following picture illustrates the architecture of the solution discussed here. We will build and deploy two sample applications on Minishift. They integrate with a NoSQL database, which also runs as a service on the OpenShift platform.

Now, we may proceed to the development.

1. Including Arquillian Cube dependencies

Before adding the Arquillian Cube libraries as dependencies we should define a dependency management section in our pom.xml. It should contain the BOM of the Arquillian framework and also that of its Cube extension.

<dependencyManagement>

<dependencies>

<dependency>

<groupId>org.arquillian.cube</groupId>

<artifactId>arquillian-cube-bom</artifactId>

<version>1.15.3</version>

<scope>import</scope>

<type>pom</type>

</dependency>

<dependency>

<groupId>org.jboss.arquillian</groupId>

<artifactId>arquillian-bom</artifactId>

<version>1.4.0.Final</version>

<scope>import</scope>

<type>pom</type>

</dependency>

</dependencies>

</dependencyManagement>

Here’s the list of libraries used in my sample project. The most important thing is to include the starter for the Arquillian Cube OpenShift extension, which contains all the required dependencies. It is also worth including the arquillian-cube-requirement artifact if you would like to annotate the test class with @RunWith(ArquillianConditionalRunner.class), and openshift-client in case you would like to use the Fabric8 OpenShiftClient.

<dependency>

<groupId>org.jboss.arquillian.junit</groupId>

<artifactId>arquillian-junit-container</artifactId>

<version>1.4.0.Final</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.arquillian.cube</groupId>

<artifactId>arquillian-cube-requirement</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.arquillian.cube</groupId>

<artifactId>arquillian-cube-openshift-starter</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>io.fabric8</groupId>

<artifactId>openshift-client</artifactId>

<version>3.1.12</version>

<scope>test</scope>

</dependency>

2. Running Minishift

I gave detailed instructions on how to run Minishift locally in my previous articles about OpenShift. Here’s the full list of commands that should be executed in order to start Minishift, reuse the Docker daemon managed by Minishift, and create the test namespace (project).

$ minishift start --vm-driver=virtualbox --memory=2G
$ minishift docker-env
$ minishift oc-env
$ oc login -u developer -p developer
$ oc new-project sample-deployment

We also have to create the Mongo database service on OpenShift. The OpenShift platform provides an easy way of deploying built-in services via the web console available at https://192.168.99.100:8443. There you can select the required service on the main dashboard and just confirm the installation using the default properties. Otherwise, you would have to provide a YAML template with the deployment configuration and apply it to Minishift using the oc command. The YAML file will also be required if you decide to recreate the namespace on every single test case (explained later in Step 3). I won’t paste the content of the template with the configuration for creating the MongoDB service on Minishift here. This file is available in my GitHub repository as /openshift/mongo-deployment.yaml. To access that file you need to clone the repository sample-vertx-kubernetes and switch to branch openshift (https://github.com/piomin/sample-vertx-kubernetes/tree/openshift-tests). It contains definitions of the secret, persistentVolumeClaim, deploymentConfig and service.

3. Configuring connection with Minishift for Arquillian

All the Arquillian configuration settings should be provided in the arquillian.xml file located in the src/test/resources directory. When running Arquillian tests on Minishift you generally have two approaches to choose from. You can create a new namespace for every test suite and remove it after the test, or use an existing one and then remove all the created components within the selected namespace. The first approach is the default for every test unless you change it in the Arquillian configuration file using the namespace.use.existing and namespace.use.current properties.

<extension qualifier="openshift">
  <property name="namespace.use.current">true</property>
  <property name="namespace.use.existing">sample-deployment</property>
  <property name="kubernetes.master">https://192.168.99.100:8443</property>
  <property name="cube.auth.token">EMNHP8QIB4A_VU4kE_vQv8k9he_4AV3GTltrzd06yMU</property>
</extension>

You also have to set the Kubernetes master address and API token. In order to obtain the token just run the following command.

$ oc whoami -t
EMNHP8QIB4A_VU4kE_vQv8k9he_4AV3GTltrzd06yMU

4. Building Arquillian JUnit test

Every JUnit test class should be annotated with @RequiresOpenshift. It should also have a runner set. In this case it is ArquillianConditionalRunner. The test method testCustomerRoute applies the configuration from the file deployment.yaml, which is assigned to the method using the @Template annotation.

The important part of this test is the declaration of the route’s URL. We have to annotate it with the following annotations:

- @RouteURL – searches for a route with the name given in the value parameter and injects it into a URL object instance

- @AwaitRoute – if you do not declare this annotation, the test will finish right after it starts, because deployment on OpenShift is processed asynchronously. @AwaitRoute forces the test to wait until the route is available on Minishift. We can set the timeout for waiting on the route (in this case 2 minutes) and the route’s path. The path is especially important here: without it our test won’t locate the route and will finish with a 2-minute timeout.
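The waiting behaviour described for @AwaitRoute can be pictured as a simple polling loop with a deadline. The sketch below is not Arquillian Cube’s implementation; it is a JDK-only illustration in which the condition (for example "the route answers with a successful status") is passed in as a BooleanSupplier. The class name AwaitSketch and its parameters are assumptions made for this example.

```java
import java.time.Duration;
import java.util.function.BooleanSupplier;

// Hypothetical polling helper, not Arquillian Cube code.
final class AwaitSketch {

    // Polls the condition until it holds or the timeout elapses.
    // Returns true if the condition became true in time, false otherwise.
    static boolean await(BooleanSupplier condition, Duration timeout, Duration interval) {
        long deadline = System.nanoTime() + timeout.toNanos();
        while (true) {
            if (condition.getAsBoolean()) {
                return true;
            }
            if (System.nanoTime() >= deadline) {
                return false;
            }
            try {
                Thread.sleep(interval.toMillis());
            } catch (InterruptedException e) {
                // Restore the interrupt flag and give up waiting.
                Thread.currentThread().interrupt();
                return false;
            }
        }
    }
}
```

In a test, the condition would typically issue an HTTP request against the route’s path and report whether the response was successful. This also shows why the path matters: a probe against the wrong path never succeeds, and the loop ends only when the timeout is reached.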

The test method is very simple. I only send a POST request with a JSON object to the endpoint assigned to the customer-route route and verify that the HTTP status code is 200. Because I had a problem with injecting the route’s URL (it doesn’t work for my sample with Minishift v3.9.0, while it works with Minishift v3.7.1), I had to build it manually in the code. If injection worked properly, we could use the URL url instance instead.

@Category(RequiresOpenshift.class)

@RequiresOpenshift

@RunWith(ArquillianConditionalRunner.class)

public class CustomerServiceApiTest {

private static final Logger LOGGER = LoggerFactory.getLogger(CustomerServiceApiTest.class);

@ArquillianResource

OpenShiftAssistant assistant;

@ArquillianResource

OpenShiftClient client;

@RouteURL(value = "customer-route")

@AwaitRoute(timeoutUnit = TimeUnit.MINUTES, timeout = 2, path = "/customer")

private URL url;

@Test

@Template(url = "classpath:deployment.yaml")

public void testCustomerRoute() {

OkHttpClient httpClient = new OkHttpClient();

RequestBody body = RequestBody.create(MediaType.parse("application/json"), "{\"name\":\"John Smith\", \"age\":33}");

Request request = new Request.Builder().url("http://customer-route-sample-deployment.192.168.99.100.nip.io/customer").post(body).build();

try {

Response response = httpClient.newCall(request).execute();

LOGGER.info("Test: response={}", response.body().string());

Assert.assertNotNull(response.body());

Assert.assertEquals(200, response.code());

} catch (IOException e) {

e.printStackTrace();

}

}

}

5. Preparing deployment configuration

Before running the test we have to prepare the template with the configuration, which is loaded by Arquillian Cube using the @Template annotation. We need to create a deploymentConfig, inject into it the MongoDB credentials stored in the secret object, and finally expose the service outside the cluster using a route object.

kind: Template
apiVersion: v1
metadata:
  name: customer-template
objects:
  - kind: ImageStream
    apiVersion: v1
    metadata:
      name: customer-image
    spec:
      dockerImageRepository: piomin/customer-vertx-service
  - kind: DeploymentConfig
    apiVersion: v1
    metadata:
      name: customer-service
    spec:
      template:
        metadata:
          labels:
            name: customer-service
        spec:
          containers:
          - name: customer-vertx-service
            image: piomin/customer-vertx-service
            ports:
            - containerPort: 8090
              protocol: TCP
            env:
            - name: DATABASE_USER
              valueFrom:
                secretKeyRef:
                  key: database-user
                  name: mongodb
            - name: DATABASE_PASSWORD
              valueFrom:
                secretKeyRef:
                  key: database-password
                  name: mongodb
            - name: DATABASE_NAME
              valueFrom:
                secretKeyRef:
                  key: database-name
                  name: mongodb
      replicas: 1
      triggers:
      - type: ConfigChange
      - type: ImageChange
        imageChangeParams:
          automatic: true
          containerNames:
          - customer-vertx-service
          from:
            kind: ImageStreamTag
            name: customer-image:latest
      strategy:
        type: Rolling
      paused: false
      revisionHistoryLimit: 2
      minReadySeconds: 0
  - kind: Service
    apiVersion: v1
    metadata:
      name: customer-service
    spec:
      ports:
      - name: "web"
        port: 8090
        targetPort: 8090
      selector:
        name: customer-service
  - kind: Route
    apiVersion: v1
    metadata:
      name: customer-route
    spec:
      path: "/customer"
      to:
        kind: Service
        name: customer-service

6. Testing inter-service communication

In the sample project, communication with other microservices is handled by the Vert.x WebClient. It takes the Kubernetes service name and its container port as parameters. It is implemented inside customer-service by AccountClient, which is then invoked inside the Vert.x HTTP route implementation. Here’s the AccountClient implementation.

public class AccountClient {

private static final Logger LOGGER = LoggerFactory.getLogger(AccountClient.class);

private Vertx vertx;

public AccountClient(Vertx vertx) {

this.vertx = vertx;

}

public AccountClient findCustomerAccounts(String customerId, Handler<AsyncResult<List>> resultHandler) {

WebClient client = WebClient.create(vertx);

client.get(8095, "account-service", "/account/customer/" + customerId).send(res2 -> {

LOGGER.info("Response: {}", res2.result().bodyAsString());

List accounts = res2.result().bodyAsJsonArray().stream().map(it -> Json.decodeValue(it.toString(), Account.class)).collect(Collectors.toList());

resultHandler.handle(Future.succeededFuture(accounts));

});

return this;

}

}

The endpoint GET /account/customer/:customerId exposed by account-service is called within the implementation of GET /customer/:id exposed by customer-service. This time we create a new namespace instead of using the existing one. That’s why we have to apply the MongoDB deployment configuration before applying the configuration of the sample services. We also need to upload the configuration of account-service, which is provided in the account-deployment.yaml file. The rest of the JUnit test is pretty similar to the test described in Step 4. It waits until customer-route is available on Minishift. The only differences are the URL being called and the dynamic injection of the namespace into the route’s URL.

@Category(RequiresOpenshift.class)

@RequiresOpenshift

@RunWith(ArquillianConditionalRunner.class)

@Templates(templates = {

@Template(url = "classpath:mongo-deployment.yaml"),

@Template(url = "classpath:deployment.yaml"),

@Template(url = "classpath:account-deployment.yaml")

})

public class CustomerCommunicationTest {

private static final Logger LOGGER = LoggerFactory.getLogger(CustomerCommunicationTest.class);

@ArquillianResource

OpenShiftAssistant assistant;

String id;

@RouteURL(value = "customer-route")

@AwaitRoute(timeoutUnit = TimeUnit.MINUTES, timeout = 2, path = "/customer")

private URL url;

// ...

@Test

public void testGetCustomerWithAccounts() {

LOGGER.info("Route URL: {}", url);

String projectName = assistant.getCurrentProjectName();

OkHttpClient httpClient = new OkHttpClient();

Request request = new Request.Builder().url("http://customer-route-" + projectName + ".192.168.99.100.nip.io/customer/" + id).get().build();

try {

Response response = httpClient.newCall(request).execute();

LOGGER.info("Test: response={}", response.body().string());

Assert.assertNotNull(response.body());

Assert.assertEquals(200, response.code());

} catch (IOException e) {

e.printStackTrace();

}

}

}
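Both tests build the route address by hand following the pattern route name, dash, project name, dot, cluster IP, then .nip.io. A small helper makes that pattern explicit and avoids repeating the string concatenation. This is only a sketch: the class RouteUrlSketch is hypothetical, and the host pattern is taken from the hardcoded URLs above rather than from any OpenShift API.

```java
// Hypothetical helper that mirrors the hand-built URLs used in the tests.
final class RouteUrlSketch {

    // Builds http://<route>-<project>.<ip>.nip.io<path>.
    static String routeUrl(String routeName, String projectName, String clusterIp, String path) {
        // Accept paths with or without a leading slash.
        String normalizedPath = path.startsWith("/") ? path : "/" + path;
        return "http://" + routeName + "-" + projectName + "." + clusterIp + ".nip.io" + normalizedPath;
    }

    public static void main(String[] args) {
        System.out.println(routeUrl("customer-route", "sample-deployment", "192.168.99.100", "/customer"));
    }
}
```

In the communication test, the project name would come from assistant.getCurrentProjectName(), as in the code above, and the path would be "/customer/" followed by the customer id.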

You can run the test using your IDE or just by executing command mvn clean install.

Conclusion

Arquillian Cube offers a lightweight solution for integration testing on Kubernetes and OpenShift platforms. It is not difficult to prepare and upload the configuration for the database and microservices and then deploy it on an OpenShift node. You can even test communication between microservices just by deploying the dependent application with an OpenShift template.

Quick guide to deploying Java apps on OpenShift

In this article I’m going to show you how to deploy your applications on OpenShift (Minishift), connect them with other services exposed there, and use some other interesting deployment features provided by OpenShift. OpenShift is built on top of Docker containers and the Kubernetes container cluster orchestrator. Currently, it is the most popular enterprise platform based on those two technologies, so it is definitely worth examining in more detail.

1. Running Minishift

We use Minishift to run a single-node OpenShift cluster on the local machine. The only prerequisite for installing Minishift is a virtualization tool. I use Oracle VirtualBox as the hypervisor, so I set the --vm-driver parameter to virtualbox in my start command.

$ minishift start --vm-driver=virtualbox --memory=3G

2. Running Docker

It turns out that you can easily reuse the Docker daemon managed by Minishift and run Docker commands directly from your command line, without any additional installation. To achieve this, just run the following command (Windows cmd syntax) after starting Minishift.

@FOR /f "tokens=* delims=^L" %i IN ('minishift docker-env') DO @call %i

3. Running OpenShift CLI

The last tool required before starting any practical exercise with Minishift is the CLI, which is available as the oc command. To enable it on your command line, run the following commands.

$ minishift oc-env

$ SET PATH=C:\Users\minkowp\.minishift\cache\oc\v3.9.0\windows;%PATH%

$ REM @FOR /f "tokens=*" %i IN ('minishift oc-env') DO @call %i

Alternatively, you can use the OpenShift web console, which is available on port 8443. On my Windows machine it is launched by default at the address 192.168.99.100.

4. Building Docker images of the sample applications

I prepared two sample applications to present the OpenShift deployment process. These are simple Java Vert.x applications that provide an HTTP API and store data in MongoDB. However, the technology is not very important here. We need to build Docker images with these applications. The source code is available on GitHub (https://github.com/piomin/sample-vertx-kubernetes.git) in the openshift branch (https://github.com/piomin/sample-vertx-kubernetes/tree/openshift). Here’s a sample Dockerfile for account-vertx-service.

    FROM openjdk:8-jre-alpine
    ENV VERTICLE_FILE account-vertx-service-1.0-SNAPSHOT.jar
    ENV VERTICLE_HOME /usr/verticles
    ENV DATABASE_USER mongo
    ENV DATABASE_PASSWORD mongo
    ENV DATABASE_NAME db
    EXPOSE 8095
    COPY target/$VERTICLE_FILE $VERTICLE_HOME/
    WORKDIR $VERTICLE_HOME
    ENTRYPOINT ["sh", "-c"]
    CMD ["exec java -jar $VERTICLE_FILE"]

Go to the account-vertx-service directory and run the following command to build an image from the Dockerfile shown above.

$ docker build -t piomin/account-vertx-service .

The same step should be performed for customer-vertx-service. Afterwards you have two images built, both tagged latest, which can now be deployed and run on Minishift.

5. Preparing OpenShift deployment descriptor

When working with OpenShift, the first step of an application’s deployment is to create a YAML configuration file. This file contains basic information about the deployment, such as the containers used for running the application (1), scaling (2), triggers that drive automated deployments in response to events (3), and the strategy for deploying your pods on the platform (4).

    kind: "DeploymentConfig"
    apiVersion: "v1"
    metadata:
      name: "account-service"
    spec:
      template:
        metadata:
          labels:
            name: "account-service"
        spec:
          containers: # (1)
            - name: "account-vertx-service"
              image: "piomin/account-vertx-service:latest"
              ports:
                - containerPort: 8095
                  protocol: "TCP"
      replicas: 1 # (2)
      triggers: # (3)
        - type: "ConfigChange"
        - type: "ImageChange"
          imageChangeParams:
            automatic: true
            containerNames:
              - "account-vertx-service"
            from:
              kind: "ImageStreamTag"
              name: "account-vertx-service:latest"
      strategy: # (4)
        type: "Rolling"
      paused: false
      revisionHistoryLimit: 2

Deployment configurations can be managed with the oc command like any other resource. You can create a new configuration or update an existing one with the oc apply command.

$ oc apply -f account-deployment.yaml

You may be a little surprised, but this command does not trigger any build and does not start the pods. In fact, you have only created a resource of type DeploymentConfig, which describes the deployment process. You can start this process using some other oc commands, but first let’s take a closer look at the resources required by our application.

6. Injecting environment variables

As I mentioned before, our sample applications use an external data source. They need to open a connection to an existing MongoDB instance in order to store the data passed through the HTTP endpoints exposed by the application. Here’s the MongoVerticle class, which is responsible for establishing the client connection to MongoDB. It uses environment variables to set the security credentials and the database name.

    public class MongoVerticle extends AbstractVerticle {

        @Override
        public void start() throws Exception {
            ConfigStoreOptions envStore = new ConfigStoreOptions()
                .setType("env")
                .setConfig(new JsonObject().put("keys", new JsonArray().add("DATABASE_USER").add("DATABASE_PASSWORD").add("DATABASE_NAME")));
            ConfigRetrieverOptions options = new ConfigRetrieverOptions().addStore(envStore);
            ConfigRetriever retriever = ConfigRetriever.create(vertx, options);
            retriever.getConfig(r -> {
                String user = r.result().getString("DATABASE_USER");
                String password = r.result().getString("DATABASE_PASSWORD");
                String db = r.result().getString("DATABASE_NAME");
                JsonObject config = new JsonObject();
                config.put("connection_string", "mongodb://" + user + ":" + password + "@mongodb/" + db);
                final MongoClient client = MongoClient.createShared(vertx, config);
                final AccountRepository service = new AccountRepositoryImpl(client);
                ProxyHelper.registerService(AccountRepository.class, vertx, service, "account-service");
            });
        }
    }
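The verticle above concatenates the three variables directly into the connection string, which yields a broken URI if a variable is missing or if a generated password contains characters such as @ or :. The following is a minimal sketch of a stricter variant using only the JDK (Java 10 or newer). MongoConnectionStrings and fromEnv are hypothetical names and are not part of the sample repository. The variable names and the mongodb host name are taken from the verticle.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.util.Map;

// Hypothetical helper (not in the sample repository): builds the MongoDB
// connection string from a map of environment variables.
final class MongoConnectionStrings {

    private MongoConnectionStrings() {
    }

    // Pass System.getenv() here when running inside the pod.
    static String fromEnv(Map<String, String> env) {
        String user = require(env, "DATABASE_USER");
        String password = require(env, "DATABASE_PASSWORD");
        String db = require(env, "DATABASE_NAME");
        // "mongodb" is the host name used by the original verticle.
        return "mongodb://" + encode(user) + ":" + encode(password) + "@mongodb/" + db;
    }

    // Fails fast with a readable message instead of putting "null" into the URI.
    private static String require(Map<String, String> env, String key) {
        String value = env.get(key);
        if (value == null || value.isEmpty()) {
            throw new IllegalStateException("Missing environment variable: " + key);
        }
        return value;
    }

    // Percent-encodes characters such as '@' or ':' that would break the URI.
    // URLEncoder writes spaces as '+', so they are converted to %20 afterwards.
    private static String encode(String value) {
        return URLEncoder.encode(value, StandardCharsets.UTF_8).replace("+", "%20");
    }
}
```

In the verticle, the same idea would apply to the values read from r.result() before they are put into the connection_string property.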

MongoDB is available in OpenShift’s catalog of predefined Docker images. You can easily deploy it on your Minishift instance just by clicking the “MongoDB” icon in the “Catalog” tab. A username and password will be generated automatically if you do not provide them during deployment setup. All the properties are available as the deployment’s environment variables and are stored as secrets/mongodb, where mongodb is the name of the deployment.

Environment variables can be easily injected into any other deployment using the oc set command, so they become available in the pod once the deployment has been performed. The following command injects all secrets assigned to the mongodb deployment into the configuration of our sample application’s deployment.

$ oc set env --from=secrets/mongodb dc/account-service

7. Importing Docker images to OpenShift

The deployment configuration is ready, so in theory we could start the deployment process. However, let’s go back for a moment to the deployment config defined in Step 5. There we defined two triggers that cause a new replication controller to be created, which results in deploying a new version of the pod. The first is a configuration change trigger (ConfigChange), which fires whenever changes are detected in the pod template of the deployment configuration. The second is an image change trigger (ImageChange), which fires when a new version of the Docker image is pushed to the repository. To be able to watch whether an image in the repository has changed, we have to define and create an image stream. An image stream does not contain any image data; it presents a single virtual view of related images, similar to an image repository. Inside the deployment config file we referred to the image stream account-vertx-service, so the same name should be provided in the image stream definition. In turn, by setting the spec.dockerImageRepository field we define the Docker pull specification for the image.

    apiVersion: "v1"
    kind: "ImageStream"
    metadata:
      name: "account-vertx-service"
    spec:
      dockerImageRepository: "piomin/account-vertx-service"

Finally, we can create the resource on the OpenShift platform.

$ oc apply -f account-image.yaml
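To make the two triggers from this step more concrete, here is a toy model in Java of the decision they encode. It is only an illustration of the rules described above and not how OpenShift implements triggers internally. TriggerModel and its enum names are my own hypothetical names.

```java
import java.util.Set;

// Toy model only: illustrates when the two triggers from Step 5 start a rollout.
// It is not how OpenShift implements triggers internally.
final class TriggerModel {

    enum Trigger { CONFIG_CHANGE, IMAGE_CHANGE }

    enum EventType { POD_TEMPLATE_CHANGED, IMAGE_STREAM_TAG_UPDATED }

    private final Set<Trigger> triggers;
    private final String watchedTag; // e.g. "account-vertx-service:latest"

    TriggerModel(Set<Trigger> triggers, String watchedTag) {
        this.triggers = Set.copyOf(triggers);
        this.watchedTag = watchedTag;
    }

    // imageStreamTag is only relevant for IMAGE_STREAM_TAG_UPDATED events.
    boolean startsRollout(EventType type, String imageStreamTag) {
        switch (type) {
            case POD_TEMPLATE_CHANGED:
                // ConfigChange: any change of the pod template starts a rollout.
                return triggers.contains(Trigger.CONFIG_CHANGE);
            case IMAGE_STREAM_TAG_UPDATED:
                // ImageChange: only updates of the watched image stream tag count.
                return triggers.contains(Trigger.IMAGE_CHANGE) && watchedTag.equals(imageStreamTag);
            default:
                return false;
        }
    }
}
```

With both triggers configured and account-vertx-service:latest as the watched tag, a pod template change or an update of that tag starts a rollout in this model, while an update of any other tag does not.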

8. Running deployment

Once the deployment configuration has been prepared and the Docker images have been successfully imported into the repository managed by the OpenShift instance, we can trigger the deployment using the following oc commands.

$ oc rollout latest dc/account-service

$ oc rollout latest dc/customer-service

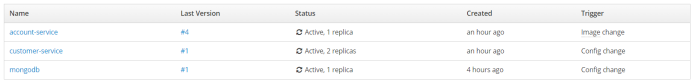

If everything goes fine, new pods should be started for the defined deployments. You can easily check this in the OpenShift web console.

9. Updating image stream

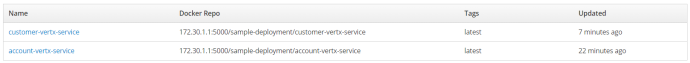

We have already created two image streams related to the Docker repositories. Here’s the screen from the OpenShift web console that shows the list of available image streams.

To be able to push a new version of an image to the OpenShift internal Docker registry, we should first perform docker login against this registry using the user’s authentication token. To obtain the token from OpenShift, use the oc whoami -t command, and then pass it to your docker login command with the -p parameter.

$ oc whoami -t

Sz9_TXJQ2nyl4fYogR6freb3b0DGlJ133DVZx7-vMFM

$ docker login -u developer -p Sz9_TXJQ2nyl4fYogR6freb3b0DGlJ133DVZx7-vMFM https://172.30.1.1:5000

Now, if you change your application and rebuild your Docker image with the latest tag, you have to push that image to the image stream on OpenShift. The address of the internal registry is generated automatically by OpenShift, and you can check it in the image stream’s details. For me, it is 172.30.1.1:5000.

$ docker tag piomin/account-vertx-service 172.30.1.1:5000/sample-deployment/account-vertx-service:latest

$ docker push 172.30.1.1:5000/sample-deployment/account-vertx-service
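The target name in the docker tag command above is made of the registry address, the OpenShift project and the image name with its tag. A small sketch of that naming rule in Java could look as follows. ImageRefs and forInternalRegistry are hypothetical names of mine, and sample-deployment is simply the project name used in the command above.

```java
// Hypothetical helper: computes the target reference passed to `docker tag`
// when re-tagging a local image for the OpenShift internal registry.
final class ImageRefs {

    private ImageRefs() {
    }

    // localImage: e.g. "piomin/account-vertx-service" or "piomin/account-vertx-service:1.0"
    static String forInternalRegistry(String registry, String project, String localImage) {
        // Keep only the last path segment; the project replaces the original namespace.
        String nameAndTag = localImage.substring(localImage.lastIndexOf('/') + 1);
        int colon = nameAndTag.indexOf(':');
        String name = colon < 0 ? nameAndTag : nameAndTag.substring(0, colon);
        // Docker falls back to the "latest" tag when none is given.
        String tag = colon < 0 ? "latest" : nameAndTag.substring(colon + 1);
        return registry + "/" + project + "/" + name + ":" + tag;
    }
}
```

For the values used in this article, ImageRefs.forInternalRegistry("172.30.1.1:5000", "sample-deployment", "piomin/account-vertx-service") returns the same reference as in the docker tag command.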

After pushing a new version of the Docker image to the image stream, a rollout of the application starts automatically. Here’s the screen from the OpenShift web console that shows the history of account-service application deployments.

Conclusion

I have shown you the successive steps of deploying your application on the OpenShift platform. Based on a sample Java application that connects to a database, I illustrated how to inject credentials into that application’s pod in a way that is entirely transparent for a developer. I also performed an update of the application’s Docker image in order to show how to trigger the deployment of a new version on image change.