Have you ever tried to run a message broker on Kubernetes? KubeMQ is a relatively new solution and is not as popular as competing tools like RabbitMQ, Kafka or ActiveMQ. However, it has one big advantage over them: it is a Kubernetes-native message broker, which may be deployed there using a single command without preparing any additional templates or manifests. This convinced me to take a closer look at KubeMQ. Continue reading “Kubernetes Messaging with Java and KubeMQ”

Tag: message broker

Redis in Microservices Architecture

Redis can be widely used in a microservices architecture. It is probably one of the few popular software solutions that your application may leverage in so many different ways. Depending on the requirements, it can act as a primary database, cache, or message broker. Because it is also a key/value store, we can use it as a configuration server or discovery server in a microservices architecture. Although it is usually defined as an in-memory data structure store, we can also run it in persistent mode.

Today, I’m going to show you some examples of using Redis with microservices built on top of the Spring Boot and Spring Cloud frameworks. These applications will communicate with each other asynchronously using Redis Pub/Sub, use Redis as a cache or primary database, and finally use Redis as a configuration server.

RabbitMQ Cluster with Consul and Vault

Almost two years ago I wrote an article about RabbitMQ clustering, RabbitMQ in cluster. It was one of the first posts on my blog, and it’s really hard to believe it has been two years since I started this blog. Anyway, one of the questions about the topic described in that article inspired me to return to the subject one more time. The question pointed to a problem with the approach to setting up the cluster. This approach assumes that we manually attach new nodes to the cluster by executing the command rabbitmqctl join_cluster with the name of an existing cluster node as a parameter. If I remember correctly, it was the only available method of creating a cluster at that time. Today we have more choices, which illustrates the evolution of RabbitMQ during the last two years.

Building and testing message-driven microservices using Spring Cloud Stream

Spring Boot and Spring Cloud give you a great opportunity to build microservices fast using different styles of communication. You can create synchronous REST microservices based on Spring Cloud Netflix libraries, as shown in one of my previous articles, Quick Guide to Microservices with Spring Boot 2.0, Eureka and Spring Cloud. You can create asynchronous, reactive microservices deployed on Netty with the Spring WebFlux project and combine them successfully with some Spring Cloud libraries, as shown in my article Reactive Microservices with Spring WebFlux and Spring Cloud. And finally, you may implement message-driven microservices based on the publish/subscribe model using Spring Cloud Stream and a message broker like Apache Kafka or RabbitMQ. The last of these approaches to building microservices is the main subject of this article. I’m going to show you how to effectively build, scale, run and test messaging microservices based on the RabbitMQ broker.

Architecture

For the purpose of demonstrating Spring Cloud Stream features we will design a sample system that uses the publish/subscribe model for inter-service communication. We have three microservices: order-service, product-service and account-service. The order-service application exposes an HTTP endpoint that is responsible for processing orders sent to our system. All incoming orders are processed asynchronously: order-service prepares and sends a message to a RabbitMQ exchange and then responds to the calling client that the request has been accepted for processing. The account-service and product-service applications listen for the order messages arriving at the exchange. account-service is responsible for checking whether there are sufficient funds on the customer’s account to realize the order and then withdrawing cash from this account. product-service checks whether there is a sufficient number of products in the store, and changes the number of available products after processing the order. Both account-service and product-service send an asynchronous response with the status of the operation through a RabbitMQ exchange (this time it is one-to-one communication using a direct exchange). After receiving the response messages, order-service sets the appropriate status of the order and exposes it to the external client through the REST endpoint GET /order/{id}.
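The last step of this flow, where order-service folds two asynchronous responses into one order status, can be sketched in plain Java without any Spring dependency. This is only an illustration under assumptions: the article does not show this logic, so the class name OrderStatusTracker, the extra statuses NEW and PROCESSING, and the rule "accepted only when both services accept" are all hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Plain-Java sketch (no Spring): how order-service might combine the two
// asynchronous responses into a single order status.
// Assumption: the class name, the NEW/PROCESSING statuses and the rule
// below are hypothetical and are not taken from the sample repository.
class OrderStatusTracker {

    enum OrderStatus { NEW, PROCESSING, ACCEPTED, REJECTED }

    private final Map<Long, OrderStatus> statuses = new HashMap<>();

    // Called when the HTTP endpoint has accepted the order for processing.
    void register(long orderId) {
        statuses.put(orderId, OrderStatus.NEW);
    }

    // Called once per response from account-service or product-service.
    OrderStatus onResponse(long orderId, boolean accepted) {
        OrderStatus current = statuses.get(orderId);
        if (current == null) {
            throw new IllegalArgumentException("Unknown order: " + orderId);
        }
        OrderStatus next;
        if (current == OrderStatus.REJECTED || !accepted) {
            next = OrderStatus.REJECTED;      // a single rejection is final
        } else if (current == OrderStatus.NEW) {
            next = OrderStatus.PROCESSING;    // first acceptance, one response still pending
        } else {
            next = OrderStatus.ACCEPTED;      // second acceptance completes the order
        }
        statuses.put(orderId, next);
        return next;
    }

    public static void main(String[] args) {
        OrderStatusTracker tracker = new OrderStatusTracker();
        tracker.register(1L);
        System.out.println(tracker.onResponse(1L, true)); // first service accepts
        System.out.println(tracker.onResponse(1L, true)); // second service accepts
    }
}
```

Running main prints PROCESSING and then ACCEPTED. Because the responses arrive independently, the sketch treats a rejection as final regardless of the order in which the two services answer.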

If you feel that the description of our sample system is a little incomprehensible, here’s the diagram with architecture for clarification.

Enabling Spring Cloud Stream

The recommended way to include Spring Cloud Stream in the project is with a dependency management system. Spring Cloud Stream has its own release train, independent of the release train of the whole Spring Cloud framework. However, if we have declared spring-cloud-dependencies in the Finchley.RELEASE version inside the dependencyManagement section, we don’t have to declare anything else in pom.xml. If you prefer to use only the Spring Cloud Stream project, you should define the following section.

    <dependencyManagement>
        <dependencies>
            <dependency>
                <groupId>org.springframework.cloud</groupId>
                <artifactId>spring-cloud-stream-dependencies</artifactId>
                <version>Elmhurst.RELEASE</version>
                <type>pom</type>
                <scope>import</scope>
            </dependency>
        </dependencies>
    </dependencyManagement>

The next step is to add the spring-cloud-stream artifact to the project dependencies. I also recommend including at least the Spring Cloud Sleuth library (spring-cloud-starter-sleuth), so that messages are sent with the same traceId as the source request incoming to order-service.

    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-stream</artifactId>
    </dependency>
    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-starter-sleuth</artifactId>
    </dependency>

Spring Cloud Stream programming model

To enable connectivity to a message broker for your application, annotate the main class with @EnableBinding. The @EnableBinding annotation takes one or more interfaces as parameters. You may choose between three interfaces provided by Spring Cloud Stream:

- Sink: This is used for marking a service that receives messages from the inbound channel.

- Source: This is used for sending messages to the outbound channel.

- Processor: This can be used in case you need both an inbound channel and an outbound channel, as it extends the Source and Sink interfaces.

Because order-service sends messages as well as receives them, its main class has been annotated with @EnableBinding(Processor.class).

Here’s the main class of order-service that enables Spring Cloud Stream binding.

    @SpringBootApplication
    @EnableBinding(Processor.class)
    public class OrderApplication {

        public static void main(String[] args) {
            new SpringApplicationBuilder(OrderApplication.class).web(true).run(args);
        }

    }

Adding message broker

In Spring Cloud Stream nomenclature, the implementation responsible for integration with a specific message broker is called a binder. By default, Spring Cloud Stream provides binder implementations for Kafka and RabbitMQ. It is able to automatically detect and use a binder found on the classpath. Any middleware-specific settings can be overridden through external configuration properties in the form supported by Spring Boot, such as application arguments, environment variables, or just the application.yml file. To include support for RabbitMQ, which is used in this article as the message broker, you should add the following dependency to the project.

    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-starter-stream-rabbit</artifactId>
    </dependency>

Now, our applications need to connect to one shared instance of the RabbitMQ broker. That’s why I run a Docker image with RabbitMQ exposed outside on the default port 5672. It also launches the web dashboard, available at http://192.168.99.100:15672.

$ docker run -d --name rabbit -p 15672:15672 -p 5672:5672 rabbitmq:management

We need to override the default RabbitMQ address for every Spring Boot application by setting the property spring.rabbitmq.host to the Docker machine IP 192.168.99.100.

    spring:
      rabbitmq:
        host: 192.168.99.100
        port: 5672

Implementing message-driven microservices

Spring Cloud Stream is built on top of the Spring Integration project. Spring Integration extends the Spring programming model to support the well-known Enterprise Integration Patterns (EIP). EIP defines a number of components that are typically used for orchestration in distributed systems. You have probably heard about patterns such as message channels, routers, aggregators, or endpoints. Let’s proceed to the implementation.

We begin with order-service, which is responsible for accepting orders, publishing them on a shared topic and then collecting asynchronous responses from downstream services. Here’s the @Service that builds a message and publishes it to the remote topic using the Source bean.

    @Service
    public class OrderSender {

        @Autowired
        private Source source;

        public boolean send(Order order) {
            return this.source.output().send(MessageBuilder.withPayload(order).build());
        }

    }

That @Service is called by the controller, which exposes the HTTP endpoints for submitting new orders and for getting an order with its status by id.

    @RestController
    public class OrderController {

        private static final Logger LOGGER = LoggerFactory.getLogger(OrderController.class);

        private ObjectMapper mapper = new ObjectMapper();

        @Autowired
        OrderRepository repository;

        @Autowired
        OrderSender sender;

        @PostMapping
        public Order process(@RequestBody Order order) throws JsonProcessingException {
            Order o = repository.add(order);
            LOGGER.info("Order saved: {}", mapper.writeValueAsString(order));
            boolean isSent = sender.send(o);
            LOGGER.info("Order sent: {}", mapper.writeValueAsString(Collections.singletonMap("isSent", isSent)));
            return o;
        }

        @GetMapping("/{id}")
        public Order findById(@PathVariable("id") Long id) {
            return repository.findById(id);
        }

    }

Now, let’s take a closer look at the consumer side. The message sent by the OrderSender bean from order-service is received by account-service and product-service. To receive a message from the topic exchange, we just have to annotate the method that takes the Order object as a parameter with @StreamListener. We also have to define the target channel for the listener; in this case it is Processor.INPUT.

    @SpringBootApplication
    @EnableBinding(Processor.class)
    public class AccountApplication {

        private static final Logger LOGGER = LoggerFactory.getLogger(AccountApplication.class);

        // Used only to log the received order as JSON
        private ObjectMapper mapper = new ObjectMapper();

        @Autowired
        AccountService service;

        public static void main(String[] args) {
            new SpringApplicationBuilder(AccountApplication.class).web(true).run(args);
        }

        // Invoked for every message that arrives on the input channel
        @StreamListener(Processor.INPUT)
        public void receiveOrder(Order order) throws JsonProcessingException {
            LOGGER.info("Order received: {}", mapper.writeValueAsString(order));
            service.process(order);
        }

    }

The received order is then processed by the AccountService bean. The order may be accepted or rejected by account-service depending on whether there are sufficient funds on the customer’s account to realize the order. The response with the acceptance status is sent back to order-service via the output channel invoked by the OrderSender bean.

    @Service
    public class AccountService {

        private static final Logger LOGGER = LoggerFactory.getLogger(AccountService.class);

        private ObjectMapper mapper = new ObjectMapper();

        @Autowired
        AccountRepository accountRepository;

        @Autowired
        OrderSender orderSender;

        public void process(final Order order) throws JsonProcessingException {
            LOGGER.info("Order processed: {}", mapper.writeValueAsString(order));
            List<Account> accounts = accountRepository.findByCustomer(order.getCustomerId());
            // The sample simply takes the first account of the customer
            Account account = accounts.get(0);
            LOGGER.info("Account found: {}", mapper.writeValueAsString(account));
            if (order.getPrice() <= account.getBalance()) {
                // Sufficient funds: accept the order and withdraw the cash
                order.setStatus(OrderStatus.ACCEPTED);
                account.setBalance(account.getBalance() - order.getPrice());
            } else {
                order.setStatus(OrderStatus.REJECTED);
            }
            orderSender.send(order);
            LOGGER.info("Order response sent: {}", mapper.writeValueAsString(order));
        }

    }

The last step is configuration, which is provided inside the application.yml file. We have to properly define destinations for the channels. While order-service assigns the orders-out destination to its output channel and the orders-in destination to its input channel, account-service and product-service do the opposite. This is logical, because a message sent by order-service via its output destination is received by the consuming services via their input destinations, and it is still the same destination on the shared broker’s exchange. Here are the configuration settings of order-service.

    spring:
      cloud:
        stream:
          bindings:
            output:
              destination: orders-out
            input:
              destination: orders-in
          rabbit:
            bindings:
              input:
                consumer:
                  exchangeType: direct

Here’s the configuration provided for account-service and product-service.

    spring:
      cloud:
        stream:
          bindings:
            output:
              destination: orders-in
            input:
              destination: orders-out
          rabbit:
            bindings:
              output:
                producer:
                  exchangeType: direct
                  routingKeyExpression: '"#"'
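The mirrored destinations can be easier to follow with a tiny plain-Java model. The sketch below uses no Spring and no RabbitMQ: InMemoryBroker is a hypothetical stand-in for the shared broker, payloads are plain strings, and the ":ACCEPTED" suffix merely stands for the status set by account-service. It only shows why the output of one side has to be the input of the other.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

// Plain-Java model (no Spring, no RabbitMQ) of the destination wiring.
// Assumption: InMemoryBroker is a hypothetical stand-in for the shared broker.
class DestinationWiringSketch {

    static class InMemoryBroker {
        private final Map<String, List<Consumer<String>>> subscribers = new HashMap<>();

        // Registers a listener on the given destination.
        void subscribe(String destination, Consumer<String> listener) {
            subscribers.computeIfAbsent(destination, d -> new ArrayList<>()).add(listener);
        }

        // Delivers the payload synchronously to every listener of the destination.
        void publish(String destination, String payload) {
            List<Consumer<String>> listeners =
                    subscribers.getOrDefault(destination, new ArrayList<>());
            for (Consumer<String> listener : listeners) {
                listener.accept(payload);
            }
        }
    }

    // Wires both services to the broker and returns what order-service gets back.
    static List<String> roundTrip(String order) {
        InMemoryBroker broker = new InMemoryBroker();
        List<String> receivedByOrderService = new ArrayList<>();

        // account-service: input = orders-out, output = orders-in
        broker.subscribe("orders-out", o -> broker.publish("orders-in", o + ":ACCEPTED"));
        // order-service: input = orders-in
        broker.subscribe("orders-in", receivedByOrderService::add);
        // order-service: output = orders-out
        broker.publish("orders-out", order);

        return receivedByOrderService;
    }

    public static void main(String[] args) {
        System.out.println(roundTrip("order-1")); // prints [order-1:ACCEPTED]
    }
}
```

If account-service subscribed to orders-in instead of orders-out, the order published by order-service would never reach it, which is the mistake the mirrored configuration avoids.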

Finally, you can run our sample microservices. For now, we just need to run a single instance of each microservice. You can easily generate some test requests by running the JUnit test class OrderControllerTest provided in my source code repository inside the order-service module. This case is simple. In the next section we will study a more advanced sample with multiple running instances of the consuming services.

Scaling up

To scale up our Spring Cloud Stream applications we just need to launch additional instances of each microservice. They will still listen for incoming messages on the same topic exchange as the currently running instances. After adding one instance each of account-service and product-service we may send a test order. The result of that test won’t be satisfactory for us. Why? A single order is received by all the running instances of every microservice. This is exactly how topic exchanges work: a message sent to a topic is received by all consumers that are listening on that topic. Fortunately, Spring Cloud Stream is able to solve that problem with a mechanism called a consumer group. It guarantees that only one of the instances handles a given message when they are placed in a competing consumer relationship. The switch to the consumer group mechanism when running multiple instances of the service is visualized in the following figure.

Configuring a consumer group is not very difficult. We just have to set the group parameter with the name of the group for the given destination. Here’s the current binding configuration for account-service. The orders-in destination is a queue created for direct communication with order-service, so only orders-out is grouped, using the spring.cloud.stream.bindings.<channelName>.group property.

    spring:
      cloud:
        stream:
          bindings:
            output:
              destination: orders-in
            input:
              destination: orders-out
              group: account

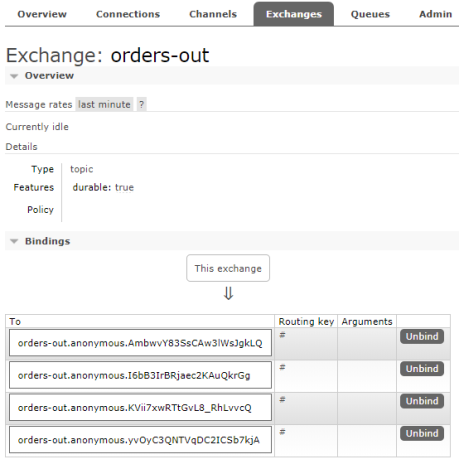

The consumer group mechanism is a concept taken from Apache Kafka, and it is implemented in Spring Cloud Stream also for the RabbitMQ broker, which does not natively support it. So, I think it is pretty interesting how it is configured on RabbitMQ. If you run two instances of the service without setting a group name on the destination, two bindings are created for a single exchange (one binding per instance), as shown in the picture below. Because two applications are listening on that exchange, there are four bindings assigned to that exchange in total.

If you set a group name for the selected destination, Spring Cloud Stream will create a single binding for all running instances of the given service. The name of the binding will be suffixed with the group name.
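The difference between the two setups can be modelled in a few lines of plain Java. The sketch below is not how Spring Cloud Stream or RabbitMQ is implemented. It is a simplified model under assumptions: each binding is one queue on the exchange, an instance without a group gets its own queue, instances of the same group share one queue, and a shared queue hands messages to its instances in round-robin order. The class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Simplified plain-Java model of bindings with and without consumer groups.
// Assumption: names and the round-robin rule are illustrative only.
class ConsumerGroupSketch {

    // One queue bound to the exchange; its instances compete for messages.
    static class GroupQueue {
        final List<String> instances = new ArrayList<>();
        private int next = 0;

        String pickInstance() {
            String chosen = instances.get(next % instances.size());
            next++;
            return chosen;
        }
    }

    // Queue name -> queue. The exchange copies every message to every queue.
    private final Map<String, GroupQueue> queues = new LinkedHashMap<>();

    // Pass null as the group to model an instance started without a group name.
    void bind(String instance, String group) {
        String queueName = (group != null) ? group : "anonymous." + instance;
        queues.computeIfAbsent(queueName, q -> new GroupQueue()).instances.add(instance);
    }

    int bindingCount() {
        return queues.size();
    }

    // Publishes one message and returns the instances that receive it.
    List<String> publish() {
        List<String> receivers = new ArrayList<>();
        for (GroupQueue queue : queues.values()) {
            receivers.add(queue.pickInstance());
        }
        return receivers;
    }

    public static void main(String[] args) {
        ConsumerGroupSketch grouped = new ConsumerGroupSketch();
        grouped.bind("account-1", "account");
        grouped.bind("account-2", "account");
        grouped.bind("product-1", "product");
        grouped.bind("product-2", "product");
        System.out.println(grouped.bindingCount() + " bindings, first order -> " + grouped.publish());
    }
}
```

With the groups set, main reports 2 bindings and the first order reaches only account-1 and product-1. Binding the same four instances with a null group yields 4 bindings, and every instance receives every order.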

Because we have included spring-cloud-starter-sleuth in the project dependencies, the same traceId header is sent with all the asynchronous messages exchanged while realizing a single request incoming to the order-service POST endpoint. Thanks to that, we can easily correlate all logs by this header using the Elastic Stack (Kibana).

Automated Testing

You can easily test your microservice without connecting to a message broker. To achieve this, you need to include spring-cloud-stream-test-support in your project dependencies. It contains the TestSupportBinder bean, which lets you interact with the bound channels and inspect any messages sent and received by the application.

    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-stream-test-support</artifactId>
        <scope>test</scope>
    </dependency>

In the test class we need to declare the MessageCollector bean, which is responsible for receiving messages retained by TestSupportBinder. Here’s my test class from account-service. Using the Processor bean I send a test order to the input channel. Then MessageCollector receives the message that is sent back to order-service via the output channel. The test method testAccepted creates an order that should be accepted by account-service, while the testRejected method sets an order price that is too high, which results in rejecting the order.

    @RunWith(SpringRunner.class)
    @SpringBootTest(webEnvironment = SpringBootTest.WebEnvironment.RANDOM_PORT)
    public class OrderReceiverTest {

        private static final Logger LOGGER = LoggerFactory.getLogger(OrderReceiverTest.class);

        @Autowired
        private Processor processor;

        @Autowired
        private MessageCollector messageCollector;

        @Test
        @SuppressWarnings("unchecked")
        public void testAccepted() {
            Order o = new Order();
            o.setId(1L);
            o.setAccountId(1L);
            o.setCustomerId(1L);
            o.setPrice(500);
            o.setProductIds(Collections.singletonList(2L));
            // Send the order to the input channel, as the broker would
            processor.input().send(MessageBuilder.withPayload(o).build());
            // Pick up the response that the service put on the output channel
            Message<Order> received = (Message<Order>) messageCollector.forChannel(processor.output()).poll();
            LOGGER.info("Order response received: {}", received.getPayload());
            assertNotNull(received.getPayload());
            assertEquals(OrderStatus.ACCEPTED, received.getPayload().getStatus());
        }

        @Test
        @SuppressWarnings("unchecked")
        public void testRejected() {
            Order o = new Order();
            o.setId(1L);
            o.setAccountId(1L);
            o.setCustomerId(1L);
            o.setPrice(100000);
            o.setProductIds(Collections.singletonList(2L));
            processor.input().send(MessageBuilder.withPayload(o).build());
            Message<Order> received = (Message<Order>) messageCollector.forChannel(processor.output()).poll();
            LOGGER.info("Order response received: {}", received.getPayload());
            assertNotNull(received.getPayload());
            assertEquals(OrderStatus.REJECTED, received.getPayload().getStatus());
        }

    }

Conclusion

Message-driven microservices are a good choice whenever you don’t need a synchronous response from your API. In this article I have shown a sample use case of the publish/subscribe model in inter-service communication between your microservices. The source code is, as usual, available on GitHub (https://github.com/piomin/sample-message-driven-microservices.git). For more interesting examples using the Spring Cloud Stream library, also with Apache Kafka, you can refer to Chapter 11 in my book Mastering Spring Cloud (https://www.packtpub.com/application-development/mastering-spring-cloud).